Docker Image

A Docker image contains:

- A cut-down OS

- A runtime environment (e.g. Node)

- Application files

- Third-party libraries

- Environment variables
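Each of those pieces usually maps to an instruction in a Dockerfile. Below is a minimal sketch. It assumes a Node app with a `package.json` and a hypothetical entry point called `app.js`. `NODE_ENV=production` is only an example variable.

```dockerfile
# Cut-down OS + runtime environment: Alpine Linux with Node preinstalled
FROM node:alpine

# Environment variables baked into the image
ENV NODE_ENV=production

WORKDIR /app

# Third-party libraries: install what package.json lists
COPY package.json .
RUN npm install

# Application files
COPY . .

# Command the container runs on start (app.js is a hypothetical file name)
CMD ["node", "app.js"]
```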

Dockerfile

A Dockerfile is a set of instructions that tells Docker how to package the application into an image.

Create a file named `Dockerfile` (no extension) in the root of the application.

Specify Environment

First we need to specify the runtime environment and the operating system. Here those are Node and Alpine Linux respectively. Alpine is a popular choice because it is very small, and the official Node images come in Alpine-based variants, although there are other options.
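`node:alpine` is a floating tag that follows the newest Node release. As a result, the same Dockerfile can build a different image months later. The sketch below shows the alternatives. `node:14-alpine` is the pinned tag that the final Dockerfile further down uses. The commented-out lines are the other options.

```dockerfile
# Floating tag: the newest Alpine-based Node image at build time
# FROM node:alpine

# Pinned major version: Node 14 on Alpine Linux
FROM node:14-alpine

# Another option: the default Debian-based Node image, which is larger than Alpine
# FROM node:14
```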

```dockerfile
FROM node:alpine
```

Copy All Files

Next we need to copy all of our application files into the image. Here we are copying all files into a directory called “dockerized”.

```dockerfile
FROM node:alpine
COPY . /dockerized
```

Set Working Directory

We can set a working directory so that we don’t have to type the full path in all of our commands.
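`WORKDIR` applies to every instruction that follows it (`RUN`, `CMD`, `COPY` and so on). Docker also creates the directory if it does not exist. This means `WORKDIR` can come before `COPY`, so the copy can use a relative destination. The final Dockerfile further down uses this pattern with `/app`. The sketch below ends up with the same files in `/dockerized`. `app.js` is a hypothetical entry point.

```dockerfile
FROM node:alpine

# Created automatically if missing; later instructions run relative to it
WORKDIR /dockerized

# "." as the destination now means /dockerized
COPY . .

# Runs /dockerized/app.js (hypothetical file name)
CMD ["node", "app.js"]
```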

```dockerfile
FROM node:alpine
COPY . /dockerized
WORKDIR /dockerized
```

Write Commands

Next we write the command that starts the app. This blog, for example, is a Gatsby site, so we would run `gatsby develop`. A very basic Node app might run `node app.js`, and a React app might run `yarn start`. It depends on your app.
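`CMD` can be written in two ways:

- Shell form: a plain string, as in `CMD gatsby develop`.
- Exec form: a JSON array.

The sketch below shows the three commands above in exec form. Only the last `CMD` in a Dockerfile takes effect, so the alternatives are commented out. `app.js` is a placeholder name.

```dockerfile
# Gatsby site (as in this blog)
CMD ["gatsby", "develop"]

# A very basic Node app (app.js is a placeholder)
# CMD ["node", "app.js"]

# A React app started with Yarn
# CMD ["yarn", "start"]
```

Exec form runs the process directly instead of through `/bin/sh -c`. The app therefore receives signals such as the `SIGTERM` sent by `docker stop`. The final Dockerfile further down also uses exec form.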

```dockerfile
FROM node:alpine
COPY . /dockerized
WORKDIR /dockerized
# assumes the gatsby CLI and the site's dependencies are installed in the image
CMD gatsby develop
```

Build Container

To build the image, open a console in the directory that contains the Dockerfile and run `docker build`. The `-t` flag tags the image with a name, and the trailing period sets the build context to the current directory. By default Docker looks for a file named `Dockerfile` at the root of that context, which is why you run the command from that directory.

```shell
docker build -t ncoughlin .
```

View Available Images

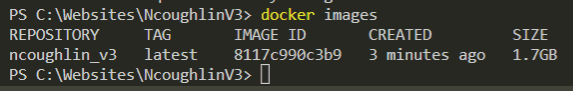

To list all the images available on a computer, run:

```shell
docker images
```

Or use the Docker Desktop app.

Run Container

Again, you can use the Desktop app, or run:

```shell
docker run IMAGE_NAME
```

In practice you usually need a few flags as well. For example, `-p` publishes a container port on the host, written as `HOST_PORT:CONTAINER_PORT`.
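`-p` is needed because the `EXPOSE` instruction in a Dockerfile only documents which ports the app listens on. It does not make those ports reachable from the host. The fragment below illustrates this using port 8000 from the Gatsby example.

```dockerfile
FROM node:14-alpine

# Records that the app listens on port 8000 inside the container.
# On its own this does NOT publish the port to the host.
EXPOSE 8000
```

The port only becomes reachable once it is published at run time. You can do that with `-p HOST_PORT:CONTAINER_PORT`, or with `-P`, which publishes every exposed port to a random host port.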

```shell
docker run -p 8000:8000 IMAGE_NAME
```

Docker Compose

Retyping all these flags every time gets tedious. An easier way is to create a `docker-compose.yml` file that sits next to your Dockerfile and holds those settings.

Then, to start everything, you just run `docker compose up`.

A Compose file lets you start multiple containers at the same time, but it works just as well for a single container. Here are my final Dockerfile and docker-compose.yml files for this site.

Dockerfile

```dockerfile
FROM node:14-alpine

ENV GATSBY_TELEMETRY_DISABLED=1

EXPOSE 8000 9929 9230

# most of these are for sharp
RUN apk add --no-cache python3 py3-pip libtool nasm make gcc autoconf automake g++
RUN apk add --upgrade --no-cache vips-dev build-base --repository https://alpine.global.ssl.fastly.net/alpine/v3.10/community/
RUN npm install -g node-gyp
RUN npm install sharp
RUN npm install -g gatsby-cli@2.12.34

WORKDIR /app

#RUN chown -R node:node /app

COPY ./package.json .
RUN npm install
COPY . .

#USER node

# start development server
# -H 0.0.0.0 listens on all interfaces so the server is reachable from outside the container;
# -p takes only the container port, the host mapping lives in docker-compose.yml
CMD ["gatsby", "develop", "-H", "0.0.0.0", "-p", "8000"]
```

docker-compose.yml

```yaml
version: '3'
services:
  gatsby:
    build:
      context: .
      dockerfile: Dockerfile
    ports:
      - "8000:8000"
      - "9929:9929"
      - "9230:9230"
    volumes:
      - /app/node_modules
      - ./content:/app/content
      - ./src:/app/src
      - .:/app
    environment:
      - NODE_ENV=development
      - GATSBY_WEBPACK_PUBLICPATH=/
      - CHOKIDAR_USEPOLLING=1
```

Docker on Windows

When using Docker on Windows, make sure your application files are inside the WSL filesystem (e.g. in your Linux home directory) and not on the Windows filesystem (e.g. under `/mnt/c`). If you don’t, you will run into all kinds of issues. You will get permission errors, and live updates won’t work because file-change notifications from the Windows side aren’t reliably picked up inside the container. It’s a mess.

Volumes

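Volumes are useful because they save us from repeating steps every time we launch a container. For example, a common practice is to put the node modules in their own volume (the `/app/node_modules` entry in the compose file above). This stops the `.:/app` bind mount from hiding the packages that `npm install` put into the image, so they don’t have to be re-installed when the container starts. Docker’s build cache also helps: it reuses the `npm install` step as long as `package.json` hasn’t changed. Together these save a lot of time.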
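The compose entry `- /app/node_modules` creates an anonymous volume at that path. A Dockerfile can declare the same kind of volume with the `VOLUME` instruction. When a container starts with a new volume there, Docker fills it with whatever the image already contains at that path. The sketch below assumes the `/app` layout from the final Dockerfile above.

```dockerfile
FROM node:14-alpine
WORKDIR /app

# Install the dependencies into /app/node_modules inside the image
COPY package.json .
RUN npm install

# Declared after npm install, so a new volume mounted here
# starts out with the packages installed above
VOLUME /app/node_modules
```

Be aware that Compose carries an existing anonymous volume over when it recreates the container. After you change `package.json`, the old volume may therefore still hold the previous packages until it is removed, for example with `docker compose down -v`.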